I wrote nearly 2,000 articles for WorldChanging, and I am very happy to have them there. Nonetheless, some of the pieces I wrote are fundamental parts of my worldview, and it's useful to have them here, too.

"Open the Future," written in mid-2003, was originally scheduled to appear in the Whole Earth magazine. Unfortunately, that issue turned out to be the final, never actually published, appearance of the magazine. I posted the essay on WorldChanging in February, 2004. In retrospect, it's a bit wordy and solemn, and focuses too much on the "singularity" concept, but it still gets the core idea across: openness is our best defense.

Very soon, sooner than we may wish, we will see the onset of a process of social and technological transformation that will utterly reshape our lives -- a process that some have termed a "singularity." While some embrace this possibility, others fear its potential. Aggressive steps to deflect this wave of global transformation will not save us, and would likely make things worse. Powerful interests desire drastic technological change; powerful cultural forces drive it. The seemingly common-sense approach of limiting access to emerging technologies simply further concentrates power in the hands of a few, while leaving us ultimately no safer. If we want a future that benefits us all, we'll have to try something radically different.

Many argue that we are powerless in the face of such massive change. Much of the memetic baggage accompanying the singularity concept emphasizes the inevitability of technological change and our utter impotence in confronting it. Some proponents would have you believe that the universe itself is structured to produce a singularity, so that resistance is not simply futile, it's ridiculous. Fortunately, they say, the post-singularity era will be one of abundance and freedom, an opportunity for all that is possible to become real. Those who try to divert or prevent a singularity aren't just fighting the inevitable, they're trying to deny themselves Heaven.

Others who take the idea of a singularity seriously are terrified. For many deep ecologists, religious fundamentalists, and other "rebels against the future," the technologies underlying a singularity -- artificial intelligence, nanotechnology, and especially biotechnology -- are inherently cataclysmic and should be controlled (at least) or eliminated (at best), as soon as possible. The potential benefits (long healthy lives, the end of material scarcity) do not outweigh the potential drawbacks (the possible destruction of humanity). Although they do not claim that resistance is useless, they too presume that we are merely victims of momentous change.

Proponents and opponents alike forget that technological development isn't a law of the universe, nor are we slaves to its relentless power. The future doesn't just happen to us. We can -- and should -- choose the future we want, and work to make it so.

Direct attempts to prevent a singularity are mistaken, even dangerous. History has shown time and again that well-meaning efforts to control the creation of powerful technologies serve only to drive their development underground into the hands of secretive government agencies, sophisticated political movements, and global-scale corporations, few of whom have demonstrated a consistent willingness to act in the best interests of the planet as a whole. These organizations -- whether the National Security Agency, Aum Shinri Kyo, or Monsanto -- act without concern for popular oversight, discussion, or guidance. When the process is secret and the goal is power, the public is the victim. The world is not a safer place with treaty-defying surreptitious bioweapons labs and hidden nuclear proliferation. Those who would save humanity by restricting transformative technologies to a power elite make a tragic mistake.

But centralized control isn't our only option. In April of 2000, I had an opportunity to debate Bill Joy, the Sun Microsystems engineer and author of the provocative Wired article, "Why The Future Doesn't Need Us." He argued that these transformative technologies could doom civilization, and we need to do everything possible to prevent such a disaster. He called for a top-down, international regime controlling the development of what he termed "knowledge-enabled" technologies like AI and nanotech, drawing explicit parallels between these emerging systems and nuclear weapon technology. I strongly disagreed. Rather than looking to some rusty models of world order for solutions, I suggested we seek a more dynamic, if counter-intuitive approach: openness.

This is no longer the Cold War world of massive military-industrial complexes girding for battle; this is a world where even moderately sophisticated groups can and do engage in cutting-edge development. Those who wish to do harm will get their hands on these technologies, no matter how much we try to restrict access. But if the dangerous uses are "knowledge-enabled," so too are the defenses. Opening the books on emerging technologies, making the information about how they work widely available and easily accessible, in turn creates the possibility of a global defense against accidents or the inevitable depredations of a few. Openness speaks to our long traditions of democracy, free expression, and the scientific method, even as it harnesses one of the newest and best forces in our culture: the power of networks and the distributed-collaboration tools they evolve.

Broad access to singularity-related tools and knowledge would help millions of people examine and analyze emerging information, nano- and biotechnologies, looking for errors and flaws that could lead to dangerous or unintended results. This concept has precedent: it already works in the world of software, with the "free software" or "open source" movement. A multitude of developers, each interested in making sure the software is as reliable and secure as possible, do a demonstrably better job at making hard-to-attack software than an office park's worth of programmers whose main concerns are market share, liability, and maintaining trade secrets.

Even non-programmers can help out with distributed efforts. The emerging world of highly distributed network technologies (so-called "grid" or "swarm" systems) makes it possible for nearly anyone to become a part-time researcher or analyst. The "Folding@Home" project, for example, enlists the aid of tens of thousands of computer users from around the world to investigate intricate models of protein folding, critical for the development of effective treatments of complex diseases. Anyone on the Internet can participate: just download the software, and boom, you're part of the search for a cure. It turns out that thousands of cheap, consumer PCs running the analysis as a background task can process the information far faster than expensive high-end mainframes or even supercomputers.

The more people participate, even in small ways, the better we get at building up our knowledge and defenses. And this openness has another, not insubstantial, benefit: transparency. It is far more difficult to obscure the implications of new technologies (or, conversely, to oversell their possibilities) when people around the world can read the plans. Monopolies are less likely to form when everyone understands the products companies make. Even cheaters and criminals have a tougher time, as any system that gets used can be checked against known archives of source code.

Opponents of this idea will claim that terrorists and dictators will take advantage of this permissive information sharing to create weapons. They will -- but this also happens now, under the old model of global restrictions and control, as has become depressingly clear. There will always be those few who wish to do others harm. But with an open approach, you also get millions of people who know how dangerous technologies work and are committed to helping to detect, defend and respond. That these are "knowledge-enabled" technologies means that knowledge also enables their control; knowledge, in turn, grows faster as it becomes more widespread.

These technologies may have the potential to be as dangerous as nuclear weapons, but they emerge like computer viruses. General ignorance of how software works does not stop viruses from spreading. Quite the opposite -- it gives a knowledgeable few the power to wreak havoc in the lives of everyone else, and gives companies that keep the information private a chance to deny that virus-enabling defects exist. Letting everyone read the source code may help a few minor sociopaths develop new viruses, but also enables a multitude of users to find and repair flaws, stopping many viruses dead in their tracks. The same applies to knowledge-enabled transformative technologies: when (not if) a terrorist figures out how to do bad things with biotechnology, a swarm of people around the world looking for defenses will make us safer far faster than would an official bureaucracy worried about being blamed.

Consider: in early 2003, the U.S. Environmental Protection Agency announced that it was upgrading its nationwide system of air-quality monitoring stations to detect certain bioterror pathogens. Now, analyzing air samples quickly requires enormous processing time; this limits both the number of monitors and the variety of germs they can detect. If one of the monitors picks up evidence of a biological agent in the air, under current conditions it will take time to analyze and confirm the finding, and then more time to determine what treatments and preventive measures -- if any -- will be effective.

Imagine, conversely, a more open system's response. EPA monitoring devices could send data not to a handful of overloaded supercomputers but to millions of PCs around the nation -- a sort of "CivilDefense@Home" project. Working faster, this distributed network could spot a wider array of potential threats. When a potential threat is found, the swarm of computers could quickly confirm the evidence, and any and all biotech researchers with the time and resources to work on countermeasures could download the data to see what we're up against. Even if there are duplicated efforts, the wider array of knowledge and experience brought to bear on the problem has a greater chance of resulting in a better solution faster - and of course answers could still be sought in the restricted labs of the CDC, FBI and USAMRIID. The more participants, the better.
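To make the "CivilDefense@Home" idea a little more concrete, here is a minimal sketch of how such a swarm might confirm a finding. Everything in it is an illustrative assumption rather than a description of any real EPA or Folding@Home system: the function names, the 0.8 threshold, the round-robin assignment, and the two-out-of-three quorum are all hypothetical. The point is only that each batch of readings goes to several independent volunteers, and an alarm is raised only when enough of them agree.

```python
def split_into_work_units(readings, unit_size):
    """Cut a list of sensor readings into fixed-size work units."""
    return [readings[i:i + unit_size] for i in range(0, len(readings), unit_size)]


def honest_volunteer(unit):
    """Hypothetical check: flag a unit if any reading exceeds 0.8."""
    return any(reading > 0.8 for reading in unit)


def faulty_volunteer(unit):
    """Hypothetical broken or malicious participant: flags everything."""
    return True


def swarm_scan(readings, volunteers, unit_size=3, replicas=3, quorum=2):
    """Return the indices of work units that at least `quorum` volunteers flag.

    Each unit is handed to `replicas` volunteers, chosen round-robin.
    (If `replicas` exceeds the number of volunteers, some volunteers
    would see the same unit twice; a real system would avoid that.)
    """
    flagged = []
    for index, unit in enumerate(split_into_work_units(readings, unit_size)):
        assigned = [volunteers[(index + k) % len(volunteers)] for k in range(replicas)]
        verdicts = [check(unit) for check in assigned]
        if sum(verdicts) >= quorum:
            flagged.append(index)
    return flagged


# Hypothetical data: only the second batch of three readings contains a spike.
sample_readings = [0.1, 0.2, 0.1, 0.3, 0.9, 0.2, 0.1, 0.1, 0.1]
sample_volunteers = [honest_volunteer, honest_volunteer, faulty_volunteer, honest_volunteer]
print(swarm_scan(sample_readings, sample_volunteers))
```

Requiring agreement among several participants is what lets an open network tolerate the occasional broken or ill-intentioned machine: in this toy run, the one faulty volunteer cannot trigger an alarm on its own.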

Both the threat and the response could happen with today's technology. Tomorrow's developments will only produce more threats and challenges more quickly. Do we really think government bureaucracies can handle them alone?

A move to a society that embraces open technology access is possible, but it will take time. This is not a tragedy; even half-measures are an improvement over a total clampdown. The basic logic of an open approach -- we're safer when more of us can see what's going on -- will still help as we take cautious steps forward. A number of small measures suggest themselves as good places to start:

• Re-open academic discourse. Post-9/11 security fears have led to restrictions on what scholarly researchers are allowed to discuss or publish. Our ability to understand and react to significant events -- disasters or otherwise -- is hampered by these controls.

• Public institutions should use open software. The philosophy of open source software matches our traditions of free discourse. Moreover, the public should have the right to see the code underlying government activities. In some cases, such as with word processing software, the public value may be slight; in other cases, such as with electronic voting software, the public value couldn't be greater. That nearly all open source software is free means public institutions will also save serious money over time.

• Research and development paid for by the public should be placed in the public domain. Proprietary interests should not be able to make use of government research grants for private gain. We all should benefit from research done with our resources.

• Our founding fathers intended intellectual property (IP) laws to promote invention, but IP laws today are used to shore up cultural monopolies. Copyright and other IP restrictions should reward innovation, not provide hundred-plus year financial windfalls for companies long after the innovators have died.

• Those who currently develop these powerful technologies should open their research to the public. Already there is a growing "open source biotechnology" movement, and key figures in the world of nanotechnology and artificial intelligence have spoken of their desire to keep their work open. This is, in many ways, the critical step. It could become a virtuous cycle, with early successes in turn bringing in more participants, more research, and greater influence over policy.

While these steps would not result in the fully-open world that would be our best hope for a safe future, they would let in much-needed cracks of light.

Fortunately, the forces of openness are gaining a greater voice around the world. The notion that self-assembling, bottom-up networks are powerful methods of adapting to ever-changing conditions has moved from the realm of academic theory into the toolbox of management consultants, military planners, and free-floating swarms of teenagers alike. Increasingly, people are coming to realize that openness is a key engine for innovation.

Centralized, hierarchical control is an effective management technique in a world of slow change and limited information -- the world in which Henry Ford built the Model T, say. In such a world, when tomorrow will look pretty much the same as today, that's a reasonable system. In a world where each tomorrow could see fundamental transformation of how we work, communicate, and live, it's a fatal mistake.

A fully open, fully distributed system won't spring forth easily or quickly. Nor will the path of a singularity be smooth. There is a feedback loop between society and technology -- changes in one drive changes in the other, and vice-versa -- but there is also a pace disconnect between them. Tools change faster than culture, and there is a tension between the desire to build new devices and systems and the need for the existing technologies to be integrated into people's lives. As a singularity gets closer, this disconnect will worsen; it's not just that society lags technology, it's that technology keeps lapping society, which is unable to settle on new paradigms before having to start anew. People trying to live in fairly modern ways live shoulder-by-jowl with people desperately trying to hang on to well-understood traditions, and both are still confronted by surprising new concepts and systems.

Change lags and lurches, as rapid improvements and innovations in technology are haphazardly integrated into other aspects of our culture(s). Technologies cross-breed, and advances in one realm spur a flurry of breakthroughs in others. These new discoveries and inventions, in turn, open up new worlds of opportunities and innovation.

If there is a key driving force pushing towards a singularity, it's international competition for power. This ongoing struggle for power and security is why, in my view, attempts to prevent a singularity simply by international fiat are doomed. The potential capabilities of transformative technologies are simply staggering. No nation will risk falling behind its competitors, regardless of treaties or UN resolutions banning intelligent machines or molecular-scale tools. The uncontrolled global transformation these technologies may spark is, in strategic terms, far less of a threat than an opponent having a decided advantage in their development -- a "singularity gap," if you will. The "missile gap" that drove the early days of the nuclear arms race would pale in comparison.

Technology doesn't make the singularity inevitable; the need for power does. One of the great lessons of the 20th century was that openness -- democracy, free expression, and transparency -- is the surest way to rein in the worst excesses of power, and to spread its benefits to the greatest number of us. Time and again, we have learned that the best way to get decisions that serve us all is to let us all decide.

The greatest danger we face comes not from a singularity itself, but from those who wish us to be impotent at its arrival, those who wish to keep its power for themselves, and those who would hide its secrets from the public. Those who see the possibility of a revolutionary future of abundance and freedom are right, as are those who fear the possibility of catastrophe and extinction. But where they are both wrong is in believing that the future is out of our hands, and should be kept out of our hands. We need an open singularity, one that we can all be a part of. That kind of future is within our reach; we need to take hold of it now.

I love to watch the future take shape.

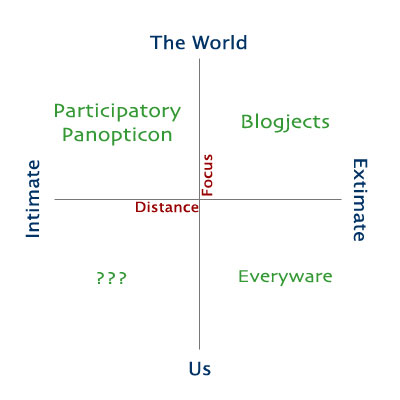

I love to watch the future take shape., subtitled "the dawning age of ubiquitous computing." Greenfield's everyware model is in some respects the polar opposite of the participatory panopticon: rather than intimate devices watching the world, Everyware posits a world of extimate devices watching each of us.

I've been fascinated for many years by the emergence of virtual worlds. Their attractiveness is obvious to anyone who has read a work of fiction and imagined themselves in that world, either alongside the heroes or off exploring new spaces. Paper and dice role-playing games (such as D&D or

I've been fascinated for many years by the emergence of virtual worlds. Their attractiveness is obvious to anyone who has read a work of fiction and imagined themselves in that world, either alongside the heroes or off exploring new spaces. Paper and dice role-playing games (such as D&D or  The future is not written in stone, but neither is it unbounded. Our actions, our choices shape the options we'll have in the days and years to come. We can, with all too little difficulty, make decisions that call into being an inescapable chain of events. But if we try, we can also make decisions that expand our opportunities, and push out the boundaries of tomorrow.

The future is not written in stone, but neither is it unbounded. Our actions, our choices shape the options we'll have in the days and years to come. We can, with all too little difficulty, make decisions that call into being an inescapable chain of events. But if we try, we can also make decisions that expand our opportunities, and push out the boundaries of tomorrow.